Unparalleled AI Computing Performance on Your Desk

NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip

Up to 775 GB of Coherent Memory

Up to 20 PFLOPS AI Performance

ConnectX-8 SuperNIC

NVIDIA DGX OS

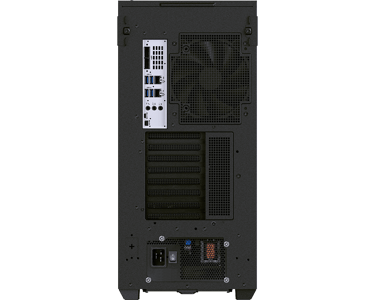

Model: FlexPrime V80B - DGX Workstation

The Most Powerful Deskside AI Performance Without Compromise

The Corsair PRO CS-GB300DGX1 Workstation, based on NVIDIA DGX™ Station, delivers unmatched deskside AI power, built on the groundbreaking NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip and up to 775 GB of coherent memory. Designed for large-scale AI training and inference, it combines state-of-the-art hardware with the NVIDIA AI Software Stack to give teams a turnkey, high-performance platform for accelerated AI development, right at their deskside.

NVIDIA Grace Blackwell Ultra Desktop Superchip

Powered by the NVIDIA Blackwell Ultra GPU with next‑gen CUDA® and fifth‑gen Tensor Cores, connected to the NVIDIA Grace CPU via NVLink®‑C2C for maximum bandwidth and system performance.

ConnectX-8 SuperNIC

Delivers up to 800Gb/s of high‑efficiency network throughput, providing ultra‑fast connectivity optimized for hyperscale AI workloads and dramatically boosting performance for AI factory environments.

Fifth Generation Tensor Cores

NVIDIA DGX Stations leverage the latest Blackwell‑generation Tensor Cores to enable 4‑bit floating‑point (FP4) AI, allowing larger next‑gen models and higher performance while maintaining accuracy.
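To make the effect of lower precision concrete, the following minimal sketch estimates the memory needed for model weights alone at 16, 8, and 4 bits per parameter and compares it with the memory figures quoted in this datasheet. The 405-billion-parameter model is a hypothetical example, and the estimate ignores activations, KV cache, and any per-block scaling overhead of real FP4 formats, so treat the results as rough lower bounds.

```python
# Rough, weights-only memory estimate at several numeric precisions.
# Assumption: the model size below is hypothetical, and overheads such as
# activations, KV cache, and FP4 scaling factors are deliberately ignored.

BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}

GPU_MEMORY_GB = 279        # HBM3e capacity quoted in this datasheet
COHERENT_MEMORY_GB = 775   # total coherent memory quoted in this datasheet


def weight_footprint_gb(num_params: float, bits: int) -> float:
    """Return the size of the weights alone, in decimal gigabytes."""
    return num_params * bits / 8 / 1e9


def smallest_fit(footprint_gb: float) -> str:
    """Name the smallest memory tier that can hold the given footprint."""
    if footprint_gb <= GPU_MEMORY_GB:
        return "GPU memory"
    if footprint_gb <= COHERENT_MEMORY_GB:
        return "coherent memory"
    return "does not fit"


hypothetical_params = 405e9  # illustrative model size, not a benchmark
for name, bits in BITS_PER_PARAM.items():
    size_gb = weight_footprint_gb(hypothetical_params, bits)
    print(f"{name}: {size_gb:.1f} GB of weights -> {smallest_fit(size_gb)}")
```

Under these assumptions, halving the bits per parameter halves the weight footprint, which is why FP4 lets a larger model stay within GPU memory.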

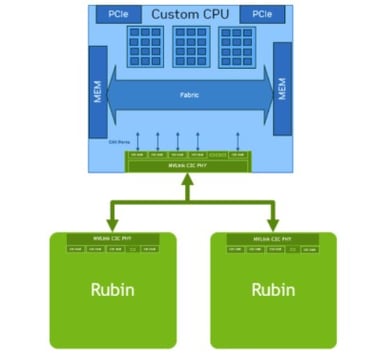

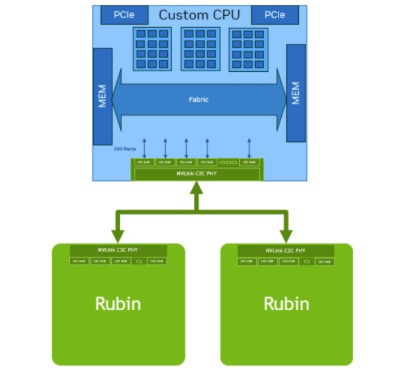

NVLink-C2C Interconnect

Extends NVLink to a high-bandwidth, chip-to-chip interconnect between the CPU and GPU, enabling fast, coherent data transfer across processors and accelerators.
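As a rough illustration of what these bandwidth figures mean, the sketch below converts the peak rates quoted in this datasheet to a common unit and computes a best-case transfer time. It assumes the peak figures could be sustained, which real workloads will not achieve, and the 200 GB payload is a hypothetical example.

```python
# Best-case transfer times from the peak bandwidths quoted in this datasheet.
# Assumption: peak rates are treated as sustained rates, so real transfers
# will take longer. All values are converted to gigabytes per second.

LINK_GB_PER_S = {
    "ConnectX-8 network (800 Gb/s)": 800 / 8,    # gigabits -> gigabytes
    "NVLink-C2C (900 GB/s)": 900.0,
    "HBM3e GPU memory (8 TB/s)": 8 * 1000.0,     # terabytes -> gigabytes
}


def transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Return the ideal time to move size_gb at the given peak bandwidth."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb / bandwidth_gb_per_s


payload_gb = 200.0  # hypothetical payload size
for name, bandwidth in LINK_GB_PER_S.items():
    print(f"{name}: {transfer_seconds(payload_gb, bandwidth):.3f} s")
```

Note the unit difference: the network figure is in gigabits per second, while the NVLink-C2C and memory figures are in bytes per second.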

Workload Optimized Power-Shifting

DGX Stations use AI‑driven system optimizations that dynamically allocate power based on active workloads, continually maximizing performance and efficiency.

Large Coherent Memory for AI

As AI models grow in size and complexity, the NVIDIA Grace Blackwell Ultra architecture enables them to train and run efficiently within a single large coherent memory pool. Its C2C superchip interconnect removes traditional CPU-GPU bottlenecks, delivering fast, scalable performance for massive-scale models.

NVIDIA AI Software Stack

Provides a full AI software stack for fine-tuning, inference, and data science, enabling seamless development on the desktop and deployment to the cloud or data center using the same tools, libraries, frameworks, and pretrained models.

NVIDIA DGX OS

Enterprises running production workloads

Workstations with predictable upgrade cycles

AI/ML and data science environments

Cloud and container deployments

AI Development

With NVIDIA CUDA‑optimized libraries accelerating deep learning and machine learning workloads—and combined with DGX Station’s massive memory and high‑throughput superchip architecture—NVIDIA’s accelerated computing platform delivers a powerful AI development environment for applications ranging from predictive maintenance to medical imaging and natural language processing.

Data Science

With NVIDIA AI software—including RAPIDS™ open‑source libraries—GPUs deliver superior performance and lower infrastructure costs across end‑to‑end data science workflows. DGX Station’s large coherent memory pool enables massive datasets to be loaded directly into memory, eliminating bottlenecks and accelerating overall throughput.

Supercomputing AI and Data Science Come to the Desktop with the Latest Corsair PRO CS-WS300DGX1

AI Inference

As AI models grow in size and complexity, Corsair PRO DGX Stations accelerate local inference, delivering exceptional performance for large language model (LLM) token generation, data analysis, content creation, AI chatbots, and more.

Personal Cloud

The system can function as a high-performance deskside system for a single user running advanced AI models on local data, or as a shared compute resource for teams fine-tuning and deploying custom models. With support for NVIDIA Multi-Instance GPU (MIG), it can be partitioned into up to seven fully isolated GPU instances, each with dedicated memory, cache, and compute cores, and seamlessly scaled to larger MIG environments in the cloud or data center. This allows administrators to deliver consistent QoS across all workloads and extend accelerated computing to every user.
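For capacity planning, the sketch below shows the simple arithmetic behind sharing the GPU among several users. It assumes an idealized even split of the 279 GB of GPU memory quoted in this datasheet; actual MIG instances use fixed profile sizes defined by NVIDIA, so the real per-instance memory will differ and should be taken from the driver's profile listing.

```python
# Idealized per-instance memory when a GPU is shared through MIG.
# Assumption: memory is split evenly, which real MIG profiles do not
# guarantee. The result is an upper-bound estimate for planning only.

GPU_MEMORY_GB = 279      # HBM3e capacity quoted in this datasheet
MAX_MIG_INSTANCES = 7    # maximum instance count quoted in this datasheet


def per_instance_gb(total_gb: float, instances: int) -> float:
    """Return the even share of total_gb for the requested instance count."""
    if not 1 <= instances <= MAX_MIG_INSTANCES:
        raise ValueError(f"instances must be between 1 and {MAX_MIG_INSTANCES}")
    return total_gb / instances


for count in (1, 2, 4, 7):
    share = per_instance_gb(GPU_MEMORY_GB, count)
    print(f"{count} instance(s): about {share:.1f} GB each")
```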

Model: FlexPrime V80B SPECIFICATION

| Specification | Details |
| --- | --- |
| Form Factor | Full tower workstation desktop |
| Processor | NVIDIA Grace™ CPU Superchip with 72 Arm® Neoverse V2 cores |
| GPU | Single NVIDIA Blackwell Ultra GPU |
| Memory | Up to 775 GB of coherent memory |
| CPU Memory | Up to 496 GB LPDDR5X, up to 396 GB/s |
| GPU Memory | Up to 279 GB HBM3e, 8 TB/s |
| NVLink-C2C | 900 GB/s |
| Networking / Peak Bandwidth | NVIDIA ConnectX®-8 SuperNIC, up to 800 Gb/s, Ethernet |
| Ethernet Ports | 2x QSFP112 ports with NVIDIA ConnectX-8 |
| | 1x RJ45 10GbE (Marvell AQC113) |
| | 1x 1000Base-T dedicated out-of-band management port (connected to BMC) |
| MIG | Up to 7 instances |
| PCIe Slots | 1x double-wide PCIe 5.0 x16 slot (for display adapter graphics card) |
| | 2x PCIe Gen 5 x16 (x8 electrical) |
| Storage | 2 TB + 2 TB NVMe M.2 SSD installed |
| | 1x PCIe 5.0 x4 NVMe M.2 port (from NVIDIA Grace CPU) |
| | 4x PCIe 5.0 x4 U.2 NVMe bays (internal, requires NVMe HBA) |
| Server Management | Management module with BMC |
| Rear I/O | 4x USB 3.1 Type-A ports |
| | 1x Mini DP port |
| | 1x 2-channel audio port |
| | 1x Micro USB COM port |
| Cooling / Thermal Solution | Double 360 radiator, closed-loop liquid cooling |
| | Targeting < 40 dBA sound pressure at maximum workload at 25 °C ambient |
| Power Supply | 1x 1600 W ATX, 80 PLUS Titanium |
| Decoders | 7 NVDEC |
| | 7 NVJPEG |
| Operating System | Ubuntu 24.04 LTS |